Researchers from Tokyo Metropolitan University have enhanced "super-resolution" machine learning techniques to study phase transitions. They identified key features of how large arrays of interacting particles behave at different temperatures by simulating tiny arrays and then using a convolutional neural network, working with correlation configurations, to generate a good estimate of what a larger array would look like. The massive saving in computational cost may open up new ways of understanding how materials behave.

We are surrounded by different states or phases of matter, such as gases, liquids, and solids. The study of phase transitions, how one phase transforms into another, lies at the heart of our understanding of matter in the universe, and remains a hot topic for physicists. In particular, the idea of universality, in which wildly different materials behave in similar ways thanks to a few shared features, is a powerful one. That's why physicists study model systems, often simple grids of particles that interact via simple rules. These models distill the essence of the common physics shared by materials and, amazingly, still exhibit many of the properties of real materials, like phase transitions. Due to their elegant simplicity, these rules can be encoded into simulations that tell us what materials look like under different conditions.
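To make "simple rules encoded into simulations" concrete, here is a minimal sketch of a Metropolis Monte Carlo simulation of the 2D Ising model, one of the two benchmark models mentioned later in this article. It is a textbook illustration, not the team's code: the function names, the choice J = 1 and k_B = 1, and the periodic boundaries are all assumptions.

```python
import numpy as np

def metropolis_sweep(spins, temperature, rng):
    """One Metropolis sweep of the 2D Ising model (assumes J = 1, k_B = 1)."""
    size = spins.shape[0]
    for _ in range(size * size):
        i, j = rng.integers(0, size, size=2)
        # Sum of the four nearest neighbours, wrapping around the edges.
        neighbours = (spins[(i + 1) % size, j] + spins[(i - 1) % size, j]
                      + spins[i, (j + 1) % size] + spins[i, (j - 1) % size])
        # Energy change if the spin at (i, j) were flipped.
        delta_e = 2.0 * spins[i, j] * neighbours
        # Accept flips that lower the energy, or with Boltzmann probability.
        if delta_e <= 0 or rng.random() < np.exp(-delta_e / temperature):
            spins[i, j] *= -1
    return spins

def simulate(size, temperature, sweeps, seed=0):
    """Start from random spins and apply a number of Metropolis sweeps."""
    rng = np.random.default_rng(seed)
    spins = rng.choice(np.array([-1, 1]), size=(size, size))
    for _ in range(sweeps):
        metropolis_sweep(spins, temperature, rng)
    return spins

spins = simulate(size=8, temperature=2.0, sweeps=20)
print(spins)
```

Each sweep visits as many sites as the lattice contains, so the cost grows with the number of particles, which is the scaling problem the article goes on to describe.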

However, as with all simulations, the trouble starts when we want to look at lots of particles at the same time. The computation time required becomes particularly prohibitive near phase transitions, where the dynamics slow down and the correlation length, a measure of how the state of one atom relates to the state of another some distance away, grows larger and larger. This is a real dilemma if we want to apply these findings to the real world: real materials contain many orders of magnitude more atoms and molecules than simulated matter.

That's why a team led by Professors Yutaka Okabe and Hiroyuki Mori of Tokyo Metropolitan University, in collaboration with researchers at Shibaura Institute of Technology and Bioinformatics Institute of Singapore, has been studying how to reliably extrapolate smaller simulations to larger ones using a concept known as an inverse renormalization group (RG). The renormalization group is a fundamental concept in the understanding of phase transitions, and it earned Kenneth Wilson the 1982 Nobel Prize in Physics. Recently, the field met a powerful ally in convolutional neural networks (CNNs), the same machine learning tool helping computer vision identify objects and decipher handwriting. The idea would be to give an algorithm the state of a small array of particles and get it to estimate what a larger array would look like. There is a strong analogy to the idea of super-resolution images, where blocky, pixelated images are used to generate smoother images at a higher resolution.
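As a point of reference for the super-resolution analogy, the crudest way to enlarge a configuration is to copy each particle into a 2×2 block, which gives exactly the blocky, pixelated look described above. The sketch below is only that naive baseline, not the team's CNN; the function name `naive_upscale` and the factor of 2 are illustrative assumptions.

```python
import numpy as np

def naive_upscale(spins, factor=2):
    """Enlarge a configuration by copying each site into a factor x factor block."""
    return np.kron(spins, np.ones((factor, factor), dtype=spins.dtype))

small = np.array([[1, -1],
                  [-1, 1]])
large = naive_upscale(small)
print(large)
```

A trained network would replace this fixed copying rule with a learned one that fills in plausible fine detail.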

The team has been looking at how this is applied to spin models of matter, where particles interact with other nearby particles via the direction of their spins. Previous attempts have particularly struggled to apply this to systems at temperatures above a phase transition, where configurations tend to look more random. Now, instead of using spin configurations, i.e. simple snapshots of which direction the particle spins are pointing, they considered correlation configurations, where each particle is characterized by how similar its own spin is to that of other particles, specifically those which are very far away. It turns out correlation configurations contain more subtle cues about how particles are arranged, particularly at higher temperatures.
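The difference between the two kinds of configuration can be sketched in a few lines. The example below assumes that "very far away" means half the lattice size along each axis, with periodic boundaries, and that spins take the values +1 and -1; the article does not spell out these details, so treat the function `correlation_configuration` as a hypothetical illustration.

```python
import numpy as np

def correlation_configuration(spins):
    """Per-site similarity between a spin and the spins half a lattice away."""
    shift = spins.shape[0] // 2
    # Partner spins at distance L/2 along each axis (periodic boundaries).
    far_x = np.roll(spins, shift, axis=0)
    far_y = np.roll(spins, shift, axis=1)
    # +1 where the spin agrees with both partners, -1 where it disagrees
    # with both, and 0 where it agrees with only one of them.
    return 0.5 * (spins * far_x + spins * far_y)

# Two horizontal stripes: top half up, bottom half down.
striped = np.array([[1, 1, 1, 1],
                    [1, 1, 1, 1],
                    [-1, -1, -1, -1],
                    [-1, -1, -1, -1]])
print(correlation_configuration(striped))
```

Averaging such a configuration over all sites gives a single correlation value for the snapshot.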

As with all machine learning techniques, the key is to generate a reliable training set. The team developed a new algorithm called the block-cluster transformation for correlation configurations to reduce these to smaller patterns. By applying an improved estimator technique to both the original and reduced patterns, they obtained pairs of configurations of different sizes based on the same information. All that's left is to train the CNN to convert the small patterns to larger ones.
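The idea of building training pairs by shrinking a configuration can be illustrated with the standard majority-rule block-spin transformation from renormalization group theory. This is a stand-in, not the team's block-cluster transformation, whose details are not given here; the function name `block_spin` and the random tie-breaking are assumptions.

```python
import numpy as np

def block_spin(spins, rng, block=2):
    """Majority-rule coarse-graining of a square +1/-1 spin configuration."""
    size = spins.shape[0]
    assert size % block == 0, "lattice size must be a multiple of the block size"
    # Group sites into block x block cells and sum the spins in each cell.
    cells = spins.reshape(size // block, block, size // block, block)
    totals = cells.sum(axis=(1, 3))
    coarse = np.sign(totals)
    # Cells with equal numbers of up and down spins are assigned at random.
    ties = coarse == 0
    coarse[ties] = rng.choice(np.array([-1, 1]), size=int(ties.sum()))
    return coarse

rng = np.random.default_rng(0)
large = rng.choice(np.array([-1, 1]), size=(8, 8))
small = block_spin(large, rng)
training_pair = (small, large)  # network input and target
print(small.shape, large.shape)
```

A network trained on many such pairs learns the reverse mapping, from the small pattern back to a plausible large one.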

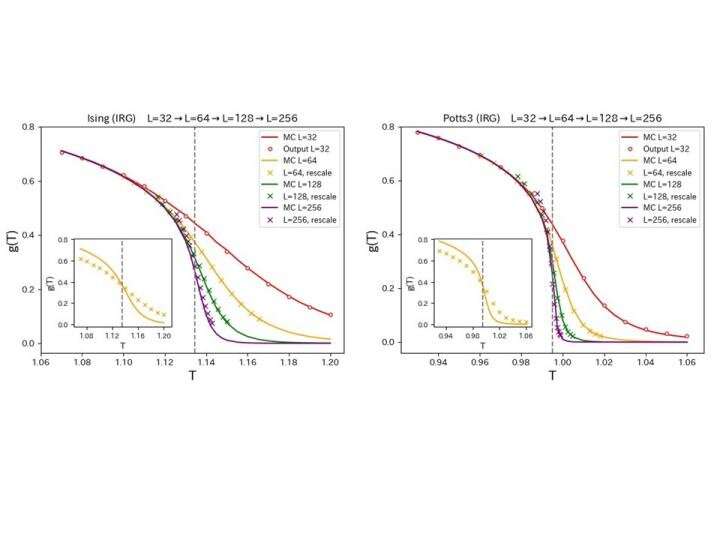

The group considered two systems, the 2D Ising model and the three-state Potts model, both key benchmarks for studies of condensed matter. For both, they found that their CNN could use a simulation of a very small array of points to reproduce how a measure of the correlation, g(T), changed across a phase transition point in much larger systems. When combined with a simple temperature rescaling based on data at an arbitrary system size, the results reproduced the same trends as direct simulations of larger systems for both models.
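For orientation, both models have exactly known transition temperatures on the square lattice. The expressions below are standard textbook results, not taken from the team's paper, and they assume nearest-neighbor coupling J with the conventions written out in the first two lines.

```latex
% Ising model: spins s_i = \pm 1
H_{\text{Ising}} = -J \sum_{\langle i,j \rangle} s_i s_j ,
\qquad
\frac{k_B T_c}{J} = \frac{2}{\ln\left(1 + \sqrt{2}\right)} \approx 2.269

% q-state Potts model: \sigma_i \in \{1, \dots, q\}
H_{\text{Potts}} = -J \sum_{\langle i,j \rangle} \delta_{\sigma_i \sigma_j} ,
\qquad
\frac{k_B T_c}{J} = \frac{1}{\ln\left(1 + \sqrt{q}\right)} \approx 0.995
\quad (q = 3)
```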

A successful implementation of inverse RG transformations promises to give scientists a glimpse of previously inaccessible system sizes, and help physicists understand the larger scale features of materials. The team now hopes to apply their method to other models which can map more complex features such as a continuous range of spins, as well as the study of quantum systems.

Provided by Tokyo Metropolitan University

Citation: New take on machine learning helps us 'scale up' phase transitions (2021, May 31) retrieved 31 May 2021 from https://ift.tt/34Bb7at
